The Promise Was Empowerment. The Reality Is Mass Data Exposure.

Over 5,000 web applications built with AI coding assistants are currently leaking sensitive data to the public internet. Not because of sophisticated hacks or zero-day exploits—but because these tools made it so easy to build apps that nobody thought about authentication.

Security researcher Dor Zvi and his team at RedAccess spent weeks scanning applications built with Lovable, Replit, Base44, and Netlify. What they found should terrify anyone who understands how production systems work: medical records, financial documents, customer service logs, and corporate strategy presentations—all accessible to anyone with the URL.

This isn’t a vulnerability in the AI models themselves. It’s far worse: it’s what happens when you remove the friction from software development without teaching people why that friction existed in the first place.

What The Press Got Wrong

Most coverage frames this as an AI safety story, another case of automation introducing bugs. That misses the point. These applications don’t have bugs in the usual sense. They have no enforced security architecture at all.

The real story is the collision between a promise and a requirement. The promise: “Anyone can build software now.” The requirement: building production software demands an understanding of authentication, authorization, data classification, and threat modeling. The first cannot be kept while the second is ignored.

When you ship a web app in 2026, you’re not deploying to localhost. You’re deploying to Cloudflare’s edge network with a globally routable URL that gets indexed by search engines and crawled by bots within hours. The old developer workflow—local testing, staging environments, security reviews—existed because deploying to production was expensive and slow. AI coding tools optimized away that entire learning curve.

How This Actually Happens

Here’s the technical reality that nobody wants to discuss: platforms like Lovable and Replit generate fully functional web applications with backend APIs, database connections, and public URLs in under five minutes. The AI writes the authentication code too—it just doesn’t enforce it by default.

Zvi’s team found that roughly 40% of scanned applications exposed genuinely sensitive data. But here’s what makes this different from traditional security research: the exposure wasn’t caused by misconfiguration of complex cloud infrastructure. It was caused by users accepting the default settings of tools explicitly marketed as requiring zero configuration.

The workflow looks like this: the user prompts “Build me a customer service dashboard.” The AI generates the works: a React frontend, a Node.js backend, a Supabase database connection, and authentication middleware. The user clicks deploy. The application goes live at predictable-subdomain.lovable.app. No one ever tests whether authentication actually prevents unauthorized access.

According to CSO Online’s analysis, this pattern repeats across the entire AI coding ecosystem. The tools generate security code because their training data includes it, but they can’t enforce its correct implementation.

The Predictable URL Problem

Lovable apps deploy to lovable.app subdomains. Replit uses replit.app. Netlify gives you netlify.app. All highly guessable. All indexed by search engines. All discoverable through basic enumeration.

RedAccess didn’t need sophisticated scanning infrastructure. They wrote a simple script that checked sequential subdomains and common patterns. Every application that returned a 200 OK response without requiring credentials went into the findings list. The total scanning time? About 72 hours of compute.

This is the cybersecurity equivalent of finding that thousands of houses in a new subdivision were built without locks because the prefab construction company assumed buyers would install them later. Except in this case, the houses contained medical records and corporate financial data.

Why Authentication-By-Default Doesn’t Exist

You might ask: why don’t these platforms force authentication on every deployed application? The answer reveals the fundamental tension in the business model.

These tools sell on frictionlessness. Their core value proposition is “idea to deployed app in five minutes.” Adding mandatory authentication adds friction—users need to configure OAuth providers, set up auth flows, test login/logout, handle password resets. That’s 30 minutes of additional work that breaks the magic moment.

I’ve shipped similar tools at Google. Product analytics are brutal: every additional required step in the deployment flow cuts conversion rates by 20-30%. When you’re competing against Replit and Vercel and a dozen other platforms, being the one that forces users to configure Clerk or Auth0 before deploying is a death sentence.

So the platforms made a bet: ship without mandatory security, rely on users to add it themselves, handle the PR crisis when researchers inevitably find exposed data. That bet is now coming due.

The Data That’s Actually Leaking

Zvi’s team found exposed applications containing:

- Patient medical histories and treatment notes from healthcare providers testing internal tools

- Bank transaction logs and account balances from fintech prototypes

- Complete customer service conversation histories including names, emails, and support tickets

- Corporate strategy presentations with revenue projections and M&A plans

- HR systems with employee performance reviews and salary data

None of this data had any protection beyond the database’s default encryption at rest, which does nothing once the application serves records to anyone who asks. None required credentials to access. Most applications had admin panels accessible via /admin or /dashboard routes that anyone could visit.

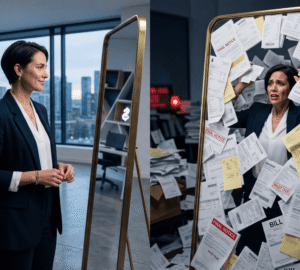

The most damaging cases weren’t startups experimenting with internal tools—they were enterprise employees using AI coding platforms to “quickly spin up a dashboard” for their team, inadvertently publishing the entire company’s Q3 financial results to the public internet.

Who Actually Wins and Loses

Winners:

Security consulting firms are about to have their best year ever. Every company that used these tools now needs incident response, forensic analysis, and security audits. Expect the Big Four consulting firms to launch dedicated AI-coding security practices by Q3.

Traditional PaaS providers like Heroku and AWS Amplify suddenly look good again. Yes, they require more configuration, but their defaults assume you’re shipping production software that matters. That assumption is now a feature, not a bug.

Identity providers like Clerk, Auth0, and Supabase Auth will see integration demand spike. Every AI coding platform will rush to add “deploy with authentication” as a one-click option. These companies will be acquisition targets within 18 months.

Losers:

AI coding platforms face an impossible choice: add mandatory security (and lose their core differentiator) or maintain the status quo (and face regulatory action). Lovable and smaller players probably get acquired by companies with compliance infrastructure. Replit and Netlify survive because they have enterprise tiers with real security controls.

Every company that deployed internal tools using these platforms now faces potential GDPR violations, HIPAA breaches, and SOC 2 compliance failures. Budget for legal fees, regulatory fines, and customer notifications. If your company used Lovable or Replit for internal tools in 2024-2026, your Q2 is about to get expensive.

The dream of “everyone can code” takes a major hit. This incident proves that software development expertise isn’t about writing syntax—it’s about understanding the operational and security context where code runs. You can’t automate away that knowledge without consequences.

What Actually Changes

Within six months, expect every major AI coding platform to implement:

- Mandatory authentication on deployed applications (breaking the entire UX)

- Automatic data classification scanning (privacy law compliance theater)

- “Security score” badges that shame users into fixing obvious issues

- Enterprise tiers with SSO, audit logging, and compliance certifications

None of this addresses the root cause: these platforms made it possible to deploy production software without understanding production software. The exposed data is already exposed. The leaks have already happened. Adding guardrails now is closing the barn door after the horses have been scraped, indexed, and archived by the Internet Archive.

The Real Technical Implications

Here’s what keeps me up at night: we’re about to see a wave of applications where authentication exists but authorization doesn’t. The platforms will add forced login flows, users will implement them minimally, and we’ll discover that authenticated users can access other users’ data because nobody implemented row-level security in the database.

The tools will generate the right Postgres RLS policies—they’re trained on best practices. But users won’t know to verify that the policies actually work. We’re trading “no authentication” for “authentication without authorization,” which is arguably worse because it creates a false sense of security.
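To make the gap concrete, here is a minimal sketch of “authentication without authorization.” It uses an in-memory SQLite table as a stand-in; it is not Postgres RLS, and the table, column, and function names are all hypothetical. The only point is the difference between checking that someone is logged in and checking that the row belongs to them.

```python
import sqlite3
from typing import Optional


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory table with one ticket per user (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE tickets (id INTEGER PRIMARY KEY, owner_id TEXT NOT NULL, body TEXT NOT NULL)"
    )
    conn.executemany(
        "INSERT INTO tickets (id, owner_id, body) VALUES (?, ?, ?)",
        [(1, "alice", "alice's refund request"), (2, "bob", "bob's billing dispute")],
    )
    return conn


def get_ticket_authn_only(conn: sqlite3.Connection, user_id: Optional[str], ticket_id: int) -> Optional[str]:
    """Anti-pattern: requires a login, then returns any ticket by id."""
    if user_id is None:
        raise PermissionError("login required")
    row = conn.execute("SELECT body FROM tickets WHERE id = ?", (ticket_id,)).fetchone()
    return row[0] if row else None


def get_ticket_authorized(conn: sqlite3.Connection, user_id: Optional[str], ticket_id: int) -> Optional[str]:
    """Requires a login and scopes the query to rows owned by the caller."""
    if user_id is None:
        raise PermissionError("login required")
    row = conn.execute(
        "SELECT body FROM tickets WHERE id = ? AND owner_id = ?",
        (ticket_id, user_id),
    ).fetchone()
    return row[0] if row else None
```

Verifying a policy means writing exactly this kind of cross-user check: log in as one user, request another user’s row, and confirm nothing comes back. A real RLS policy moves the ownership filter into the database, but the test is the same.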

This is the real cost of abstracting away complexity: you remove the feedback loops that taught developers why security matters. When deploying to production required configuring AWS IAM roles and setting up VPCs, you learned about security boundaries by necessity. When deployment is one click, you never learn the lesson until your company ends up in a Wired article.

What You Should Actually Do

If you or your team used Lovable, Replit, Base44, or Netlify to build internal tools:

This week: Audit every application deployed through these platforms. Check for exposed admin panels. Test whether authentication actually prevents unauthorized access. Take offline anything that can’t be secured immediately.
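A first pass at that audit can be scripted. The sketch below sends a request with no cookies or auth headers to a handful of routes and flags any that answer 200. The route list, function names, and the choice to treat only 200 as a finding are assumptions for illustration; a 200 can also be a login page, so every hit still needs a manual look.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable, List

# Routes to probe; /admin and /dashboard are the ones named in the findings.
ROUTES = ("/", "/admin", "/dashboard")


class NoRedirect(urllib.request.HTTPRedirectHandler):
    """Surface 3xx responses instead of silently following them to a login page."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None  # urllib then raises HTTPError carrying the 3xx code


def unauthenticated_status(url: str, timeout: float = 5.0) -> int:
    """Return the HTTP status of a GET sent without cookies or auth headers.

    Connection failures (urllib.error.URLError) are left to propagate.
    """
    opener = urllib.request.build_opener(NoRedirect)
    try:
        with opener.open(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code


def audit(
    base_url: str,
    routes: Iterable[str] = ROUTES,
    fetch_status: Callable[[str], int] = unauthenticated_status,
) -> List[str]:
    """List the URLs that answer 200 to a request carrying no credentials."""
    findings = []
    for route in routes:
        url = base_url.rstrip("/") + route
        if fetch_status(url) == 200:
            findings.append(url)
    return findings
```

Run it only against applications you own, for example `audit("https://your-internal-tool.example.com")` with your own hostname substituted. The `fetch_status` parameter exists so the logic can be tested without touching the network.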

This month: Implement proper authentication and authorization. This means real OAuth with a reputable provider, not just checking if request.headers.email exists. Add database-level security policies. Run penetration tests.
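The header anti-pattern is worth seeing side by side with a verified alternative. The sketch below is not OAuth and is no substitute for a real identity provider; it uses an HMAC-signed token from Python’s standard library purely to show the difference between trusting a client-supplied claim and verifying one. `SECRET_KEY`, the `session` header name, and all function names are hypothetical.

```python
import hashlib
import hmac
from typing import Mapping, Optional

# Hypothetical server-side secret; in practice it would come from a secrets manager.
SECRET_KEY = b"demo-secret-change-me"


def sign_session(email: str, key: bytes = SECRET_KEY) -> str:
    """Issue a token of the form '<email>.<hex signature>' after a real login."""
    signature = hmac.new(key, email.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"{email}.{signature}"


def insecure_identity(headers: Mapping[str, str]) -> Optional[str]:
    """Anti-pattern: believes whatever email the client puts in a header."""
    return headers.get("email")


def verified_identity(headers: Mapping[str, str], key: bytes = SECRET_KEY) -> Optional[str]:
    """Accept an identity only if its signature matches the server-side secret."""
    token = headers.get("session", "")
    email, separator, signature = token.rpartition(".")
    if not separator or not email:
        return None
    expected = hmac.new(key, email.encode("utf-8"), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the signature matched.
    if hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return email
    return None
```

Anyone can send `email: ceo@example.com` in a request; only the server can produce a signature that `verified_identity` accepts. A reputable provider gives you the same property with expiry, revocation, and key rotation handled for you.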

This quarter: Build internal guidelines for when AI-generated code needs security review. Spoiler: it’s “always.” Train your team on the difference between “code that runs” and “code that’s safe to run in production.”

If you’re using these platforms for customer-facing applications, assume your data is already compromised until an audit shows otherwise, and plan accordingly. Where exposure is confirmed, notify affected users and file the required breach reports. Budget for the regulatory response.

The Prediction

Within 18 months, AI coding platforms will be regulated like financial institutions—mandatory security controls, audit requirements, data breach notification obligations. The era of “just ship it and see what happens” is ending, not because the industry learned its lesson, but because governments are about to force the issue.

The uncomfortable truth: making software development accessible to everyone required removing the barriers that kept bad software out of production, and we’re about to spend the next decade dealing with the consequences.